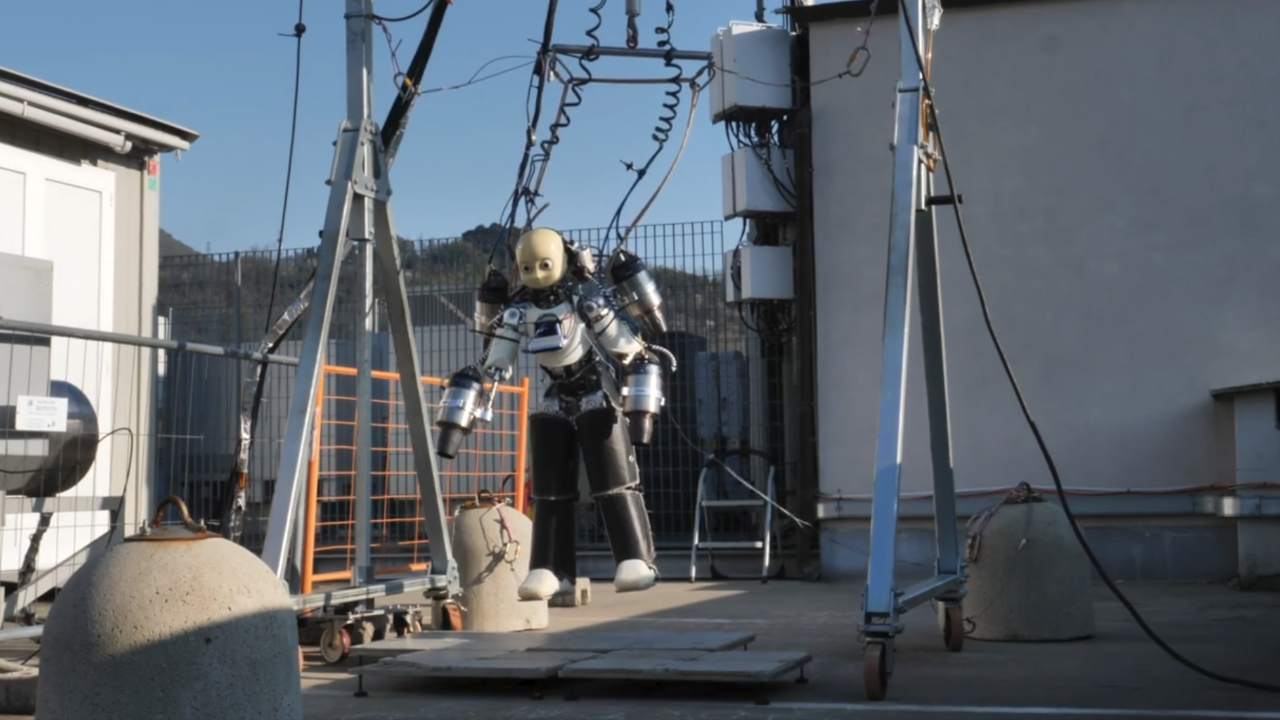

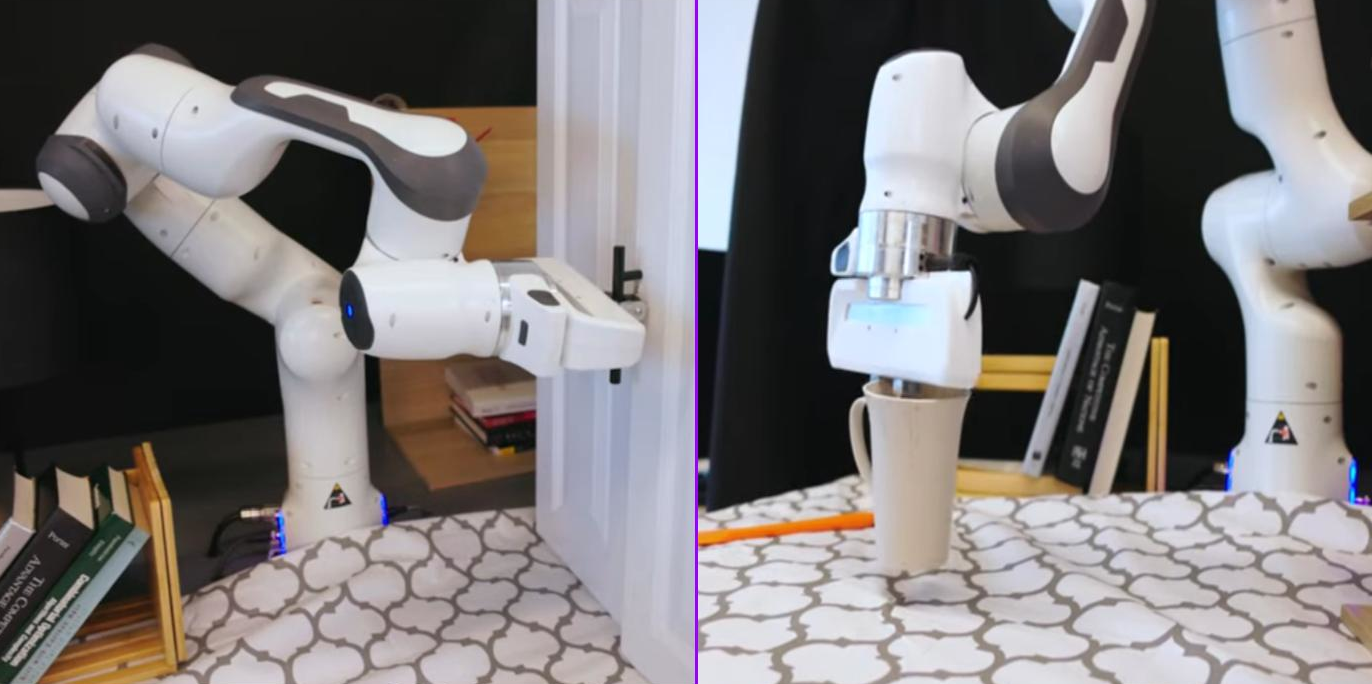

Operating robots remotely through virtual reality, sometimes called “machine embodiment” or avatar control, is one of the most exciting and advanced technologies in modern robotics.

In this process, a human operator controls a robot (typically a humanoid) remotely and in real time. The operator wears a VR headset, holds controllers in their hands, and wears motion trackers on the waist and legs, so their movements are captured accurately in 3D space and transmitted directly to the robot. The robot replicates these movements with high precision, and the operator effectively inhabits the machine’s body.
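The captured movements reach the robot as a stream of timestamped poses, one per tracked body part. The sketch below shows what a single frame of that stream might look like. It assumes a hypothetical schema (`TrackerPose`, `MotionFrame`, `encode_frame` and `decode_frame` are illustrative names, not any product’s API) and uses JSON only for readability; a real system would more likely use a compact binary encoding over a low-latency link.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TrackerPose:
    """Pose of one tracked body part (hypothetical schema)."""
    name: str                                       # e.g. "head", "left_hand", "waist"
    position: tuple[float, float, float]            # metres, in tracking-space coordinates
    orientation: tuple[float, float, float, float]  # unit quaternion (w, x, y, z)


@dataclass
class MotionFrame:
    """All tracker poses sampled at one instant."""
    timestamp: float                                # seconds, on the sender's clock
    poses: list[TrackerPose] = field(default_factory=list)


def encode_frame(frame: MotionFrame) -> bytes:
    """Serialize a frame for transmission (JSON here purely for readability)."""
    return json.dumps(asdict(frame)).encode("utf-8")


def decode_frame(payload: bytes) -> MotionFrame:
    """Rebuild a frame on the robot side, restoring tuples that JSON turned into lists."""
    data = json.loads(payload.decode("utf-8"))
    poses = [
        TrackerPose(p["name"], tuple(p["position"]), tuple(p["orientation"]))
        for p in data["poses"]
    ]
    return MotionFrame(data["timestamp"], poses)
```

In this arrangement the operator’s side would call `encode_frame` for every sampled frame and send the bytes; the robot calls `decode_frame` and passes the poses on to the motion-translation stage.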

Cameras mounted on the robot’s head stream a live stereoscopic view to the headset’s displays, placing the operator visually in the robot’s actual surroundings and giving them the sensation of standing in its place.

Meanwhile, artificial intelligence mediates the physical differences between human and robot through a process of motion translation, often called motion retargeting. Algorithms adapt the operator’s movements, moment by moment, to the robot’s dimensions and physics, keeping it stable and balanced within its physical limits. The resulting commands are sent to the motors in the robot’s joints, which execute the movement within a fraction of a second.
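A full humanoid retargeting pipeline also handles balance and whole-body dynamics, often with learned models. The sketch below covers only the geometric core for a single two-joint planar arm, and it swaps in classical inverse kinematics rather than AI. It scales the operator’s shoulder-relative hand position by the ratio of arm lengths, solves for the robot’s joint angles analytically, and clamps them to the robot’s joint limits. `ArmModel`, `retarget`, and all lengths and limits are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class ArmModel:
    """Two-link planar arm (hypothetical parameters; metres and radians)."""
    upper: float                          # shoulder-to-elbow length
    fore: float                           # elbow-to-wrist length
    shoulder_limits: tuple[float, float]  # (min, max) shoulder angle
    elbow_limits: tuple[float, float]     # (min, max) elbow angle


def scale_target(hand_xy, human: ArmModel, robot: ArmModel):
    """Scale a shoulder-relative hand position by the ratio of total arm lengths."""
    ratio = (robot.upper + robot.fore) / (human.upper + human.fore)
    return hand_xy[0] * ratio, hand_xy[1] * ratio


def planar_ik(target_xy, arm: ArmModel):
    """Analytic inverse kinematics for a two-link planar arm (positive-elbow solution)."""
    x, y = target_xy
    l1, l2 = arm.upper, arm.fore
    # Law of cosines. Clamping keeps acos defined for unreachable targets, in which
    # case the arm stretches fully toward the target or folds up completely.
    cos_elbow = (x * x + y * y - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    elbow = math.acos(max(-1.0, min(1.0, cos_elbow)))
    shoulder = math.atan2(y, x) - math.atan2(l2 * math.sin(elbow), l1 + l2 * math.cos(elbow))
    # Normalize the shoulder angle to (-pi, pi] before limits are applied.
    shoulder = math.atan2(math.sin(shoulder), math.cos(shoulder))
    return shoulder, elbow


def clamp(value: float, limits: tuple[float, float]) -> float:
    """Restrict a joint angle to the robot's mechanical range."""
    low, high = limits
    return max(low, min(high, value))


def retarget(hand_xy, human: ArmModel, robot: ArmModel):
    """Map an operator hand position to joint angles the robot can actually reach."""
    shoulder, elbow = planar_ik(scale_target(hand_xy, human, robot), robot)
    return clamp(shoulder, robot.shoulder_limits), clamp(elbow, robot.elbow_limits)
```

Clamping after solving is the crudest way to respect physical limits; a production retargeter would more likely solve one optimization that accounts for limits, collisions and balance together.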

From Human Control to Autonomous Operation

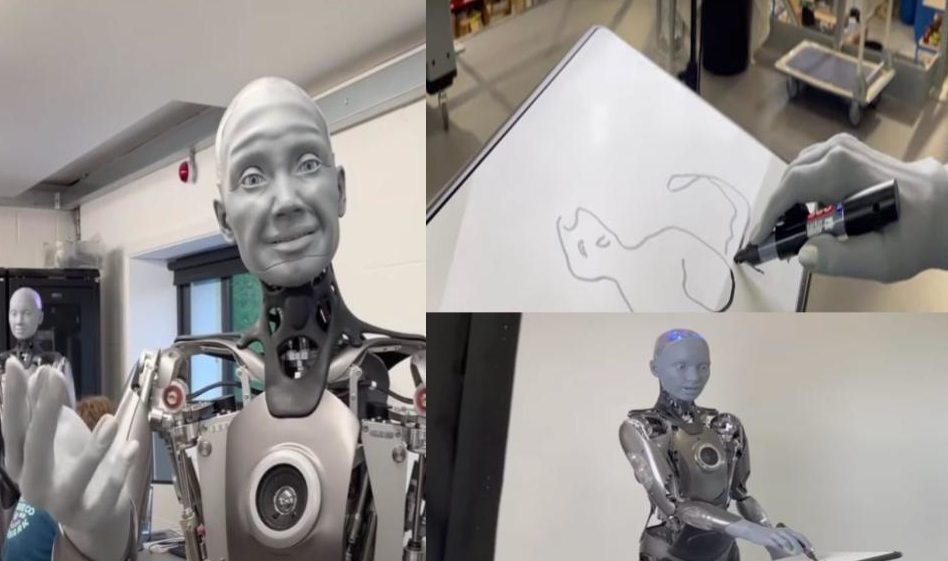

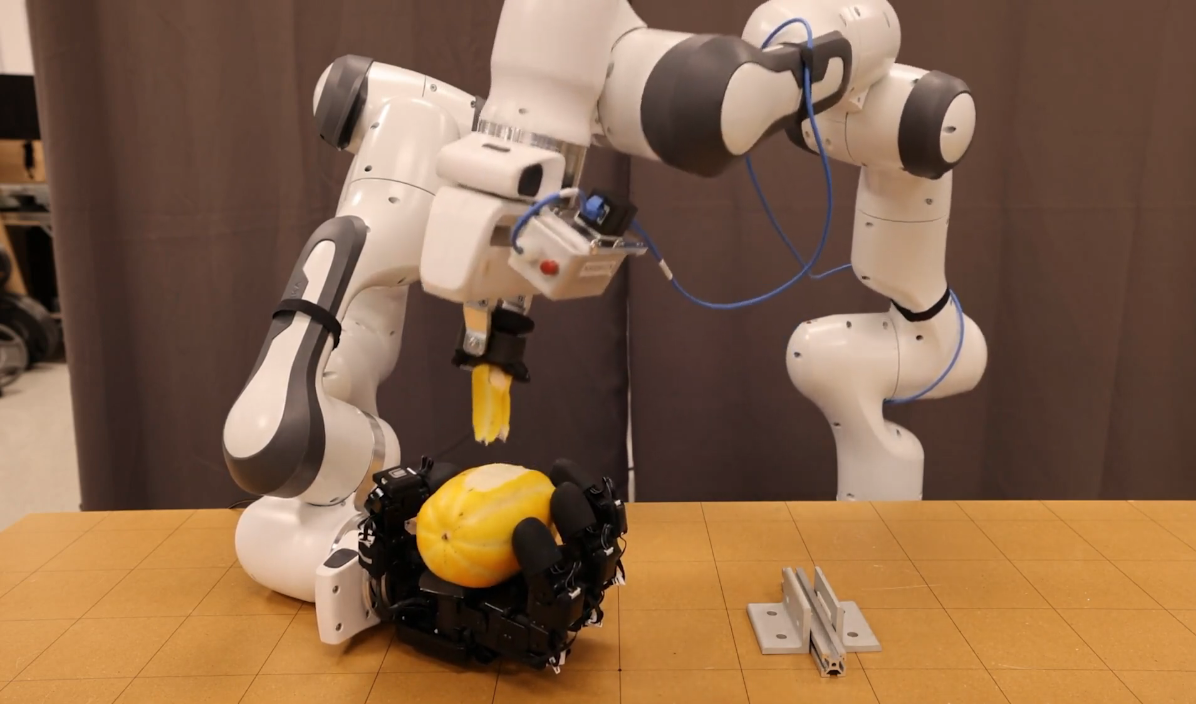

The significance of this technology goes far beyond remote-controlling robots to perform tasks in hazardous environments such as nuclear reactors; it has become a cornerstone of training the next generation of robotic AI. Instead of spending thousands of hours writing complex code to teach a robot to fold laundry or stack dishes, a human operator puts on the VR gear and performs the task themselves through the robot’s body.

During each demonstration, the robot’s sensors record large volumes of precise data: joint angles, the forces applied, and how the robot interacts with surrounding objects. These recordings serve as training examples from which AI models learn motor patterns and the context in which to apply them. With enough repetitions, the robot can progress from human-guided to fully autonomous operation, eventually recognizing its environment and carrying out these complex tasks on its own, without human intervention.
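Learning a policy from recorded demonstrations is generally called imitation learning or behaviour cloning. Practical systems typically train neural networks on large datasets; the sketch below swaps in the simplest possible instance, k-nearest-neighbour behaviour cloning, to show the data flow. Each demonstration step is stored as an (observation, action) pair, and at run time the policy averages the operator’s actions from the k most similar recorded situations. `Step`, `DemonstrationBuffer` and `KNNPolicy` are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One recorded instant of a teleoperated demonstration (hypothetical schema)."""
    observation: tuple[float, ...]  # sensor readings, e.g. joint angles, forces, object position
    action: tuple[float, ...]       # joint commands the operator produced at that instant


class DemonstrationBuffer:
    """Accumulates (observation, action) pairs while the operator performs the task."""

    def __init__(self) -> None:
        self.steps: list[Step] = []

    def record(self, observation, action) -> None:
        self.steps.append(Step(tuple(observation), tuple(action)))


class KNNPolicy:
    """Behaviour cloning by k-nearest neighbours: imitate the operator's actions
    from the k recorded situations most similar to the current one."""

    def __init__(self, buffer: DemonstrationBuffer, k: int = 3) -> None:
        if not buffer.steps:
            raise ValueError("no demonstrations recorded")
        self.steps = list(buffer.steps)
        self.k = max(1, min(k, len(self.steps)))

    def act(self, observation) -> tuple[float, ...]:
        # Rank recorded steps by Euclidean distance to the current observation.
        nearest = sorted(self.steps, key=lambda s: math.dist(s.observation, observation))[: self.k]
        # Average the actions of the nearest steps component-wise.
        dims = len(nearest[0].action)
        return tuple(sum(s.action[i] for s in nearest) / len(nearest) for i in range(dims))
```

With only a handful of demonstrations, this policy merely replays the closest recorded behaviour and generalizes poorly to unfamiliar situations, which is one reason practical systems train neural networks on many demonstrations.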

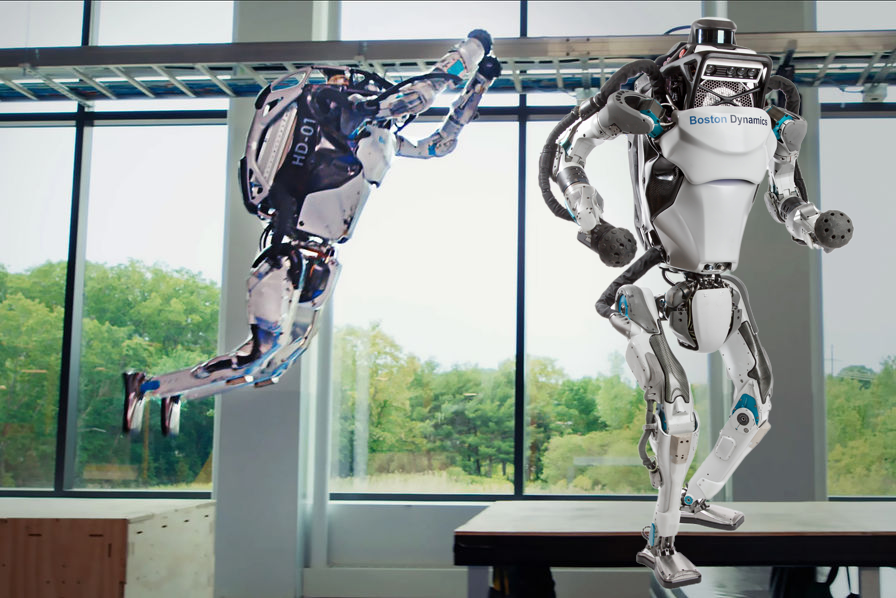

Much of today’s remarkable progress in robotics stems from these technologies. The advanced humanoids now leading the field, such as Unitree’s G1 and other pioneers known for fluid, complex movements (synchronized dancing, rapid jumps, backflips, or delicate motor tasks), rely heavily on motion capture and teleoperation. Rather than depending on manual programming, which historically made robot movements slow and rigid, this approach transfers intuitive “human motor intelligence” into neural networks. Combined with simulation environments that let a robot practice a task millions of times at accelerated speed, this wealth of human data is giving rise to a new generation of machines that adapt and move with unprecedented agility.